Hello,

I have ShinyProxy 2.6.0 running in OpenShift (a Kubernetes distribution). When an application launches and its image is not yet cached on the worker node, the image pull takes long enough that ShinyProxy stops waiting for the pod and reports a timeout error. The image is pulled from an image registry.

Error

    Status code: 500
    Message: Container failed to start
    Caused by: eu.openanalytics.containerproxy.ContainerProxyException: Container did not become ready in time
        at eu.openanalytics.containerproxy.backend.kubernetes.KubernetesBackend.startContainer(KubernetesBackend.java:293)
        at eu.openanalytics.containerproxy.backend.AbstractContainerBackend.doStartProxy(AbstractContainerBackend.java:163)
        at eu.openanalytics.containerproxy.backend.AbstractContainerBackend.startProxy(AbstractContainerBackend.java:129)
        at eu.openanalytics.containerproxy.service.ProxyService.startProxy(ProxyService.java:279)

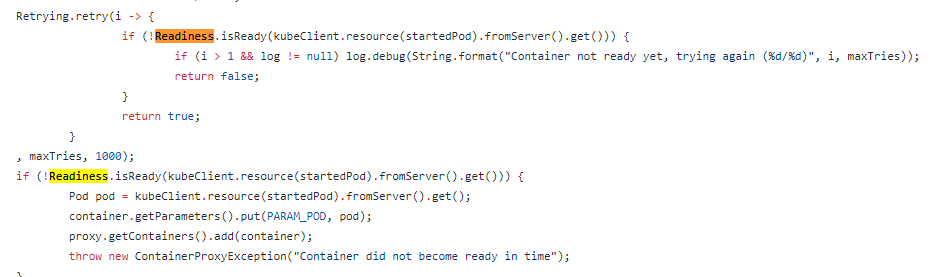

I thought increasing the container wait time (`container-wait-time: 200000`) would fix this, but that value does not seem to be used until the container is already running. Looking at the source code, this timeout appears to be tied to the Kubernetes pod "readiness" check. Attached is an image of the relevant ShinyProxy Kubernetes backend code, and here is the path to that code:
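To make the reasoning above concrete, here is a simplified Python model of a two-phase startup. This is not ShinyProxy's actual Java code. It assumes, as the code reading above suggests, that the backend first waits for the pod to report Ready under its own time limit, and only afterwards applies `container-wait-time` to the app itself. The names (`start_proxy`, `pod_ready_timeout_s`, etc.), the 60 s limit, and the 90 s pull time are hypothetical values chosen for illustration.

```python
import time
from typing import Callable

Clock = Callable[[], float]
Sleeper = Callable[[float], None]


class ContainerStartTimeout(Exception):
    """Hypothetical stand-in for ContainerProxyException in this sketch."""


def wait_until(check: Callable[[], bool], timeout_s: float, interval_s: float,
               clock: Clock = time.monotonic, sleep: Sleeper = time.sleep) -> bool:
    """Poll check() every interval_s seconds until it succeeds or timeout_s elapses."""
    deadline = clock() + timeout_s
    while not check():
        if clock() >= deadline:
            return False
        sleep(interval_s)
    return True


def start_proxy(pod_is_ready: Callable[[], bool], app_responds: Callable[[], bool],
                pod_ready_timeout_s: float, container_wait_time_s: float,
                clock: Clock = time.monotonic, sleep: Sleeper = time.sleep) -> None:
    # Phase 1 (assumed): wait for the pod to report Ready. The image pull
    # happens inside this phase, so a slow pull is bounded by
    # pod_ready_timeout_s, NOT by container_wait_time_s.
    if not wait_until(pod_is_ready, pod_ready_timeout_s, 1.0, clock, sleep):
        raise ContainerStartTimeout("Container did not become ready in time")
    # Phase 2 (assumed): only now does container-wait-time start counting,
    # bounding how long we wait for the app inside the container to answer.
    if not wait_until(app_responds, container_wait_time_s, 1.0, clock, sleep):
        raise ContainerStartTimeout("Container did not respond in time")


class FakeClock:
    """Simulated clock so the example runs instantly instead of sleeping."""

    def __init__(self) -> None:
        self.now = 0.0

    def time(self) -> float:
        return self.now

    def sleep(self, seconds: float) -> None:
        self.now += seconds


def simulate(pull_seconds: float, pod_ready_timeout_s: float,
             container_wait_time_s: float) -> str:
    """Simulate a pod whose image pull takes pull_seconds; return the outcome."""
    clock = FakeClock()
    try:
        start_proxy(
            pod_is_ready=lambda: clock.now >= pull_seconds,
            app_responds=lambda: True,
            pod_ready_timeout_s=pod_ready_timeout_s,
            container_wait_time_s=container_wait_time_s,
            clock=clock.time,
            sleep=clock.sleep,
        )
        return "started"
    except ContainerStartTimeout as exc:
        return str(exc)


if __name__ == "__main__":
    # A 90 s pull fails against a 60 s readiness limit, even with a 200 s container-wait-time.
    print(simulate(pull_seconds=90, pod_ready_timeout_s=60, container_wait_time_s=200))
    # Raising the readiness limit (not container-wait-time) lets the same pull succeed.
    print(simulate(pull_seconds=90, pod_ready_timeout_s=120, container_wait_time_s=200))
```

In this model, raising `container_wait_time_s` has no effect on a slow image pull, which matches the behaviour described above. Whether the real backend exposes a separate setting for the readiness phase is exactly what would need confirming.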

shinyproxy kubernetes backend java

This makes me think a pod patch is needed for readiness (i.e. a readiness and/or liveness probe). Can anyone confirm whether this is correct? If it is, is there a sample of adding these as pod patches?